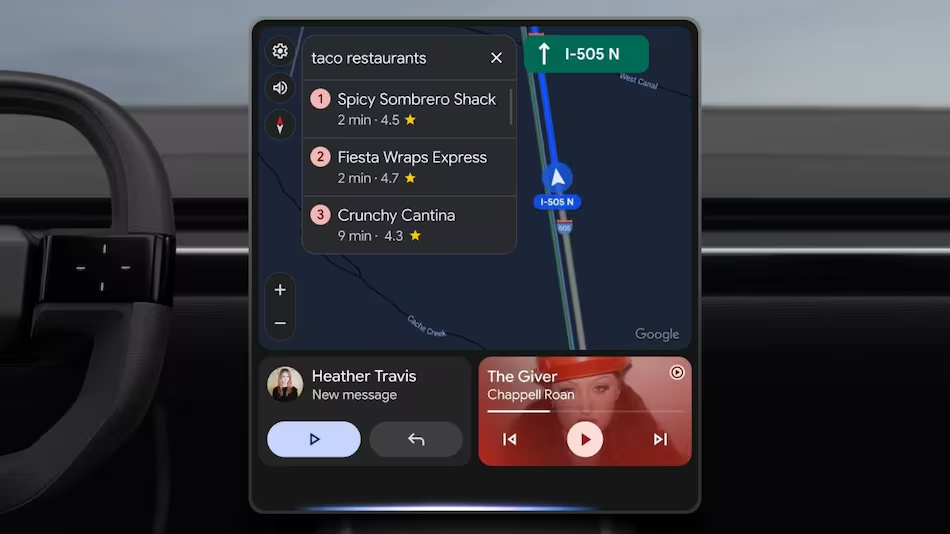

Google Begins Rolling Out Gemini Assistant for Android Auto

![Gemini starts rolling out on Android Auto with Live support [Gallery]](https://9to5google.com/wp-content/uploads/sites/4/2025/11/android-auto-gemini-live-1.jpg?quality=82&strip=all&w=1600)

Google has reportedly begun rolling out its Gemini assistant to Android Auto, bringing AI-driven functionality into the in-car experience. Over the past few days, several users have spotted Gemini in their Android Auto interfaces, suggesting that the Mountain View-based company is introducing the assistant gradually. It remains unclear whether this rollout is part of a beta program or intended for wider public access. The development follows Google’s initial announcement of the feature at Google I/O in May.

According to a 9to5Google report, Gemini has been observed on Android Auto 15.6 when connected to the Google Pixel 10 Pro XL, and on Android Auto 15.7 when paired with the Samsung Galaxy Z Fold 7. Both of these Android Auto versions are currently in beta, indicating that Google may be using the beta environment to test Gemini’s performance and compatibility before a full-scale launch.

Google has not yet said officially whether the rollout is exclusively a beta test or the beginning of a broader deployment. Users encountering Gemini in Android Auto may simply be part of an initial controlled rollout, with more devices and regions expected to gain access gradually. A phased approach of this kind would let Google monitor performance, gather user feedback, and make adjustments before releasing the feature globally.

Despite the uncertainty, the arrival of Gemini in Android Auto signals Google’s ongoing push to bring AI assistants deeper into everyday use, including in-car navigation and hands-free interaction. With Gemini, drivers could gain access to smarter route suggestions, contextual reminders, and natural language queries, which may improve both convenience and safety for Android Auto users.