Physics-Inspired Model Reveals How Deep Neural Networks Learn Features

Spring-block physics provides a novel perspective on how deep neural networks learn and develop features layer by layer.

Researchers are using spring-block physics to better understand how deep neural networks (DNNs) learn and organize features across layers. By drawing parallels between DNN training and the behavior of systems of springs and friction, they aim to illuminate the dynamics of feature extraction and memorization. In this analogy, data being compressed as it passes through the network's layers corresponds to a spring being compressed, while friction represents the network's nonlinearity. Instead of relying on noise as a smoothing mechanism, the model uses continuous spacing to propagate learning efficiently across multiple layers.

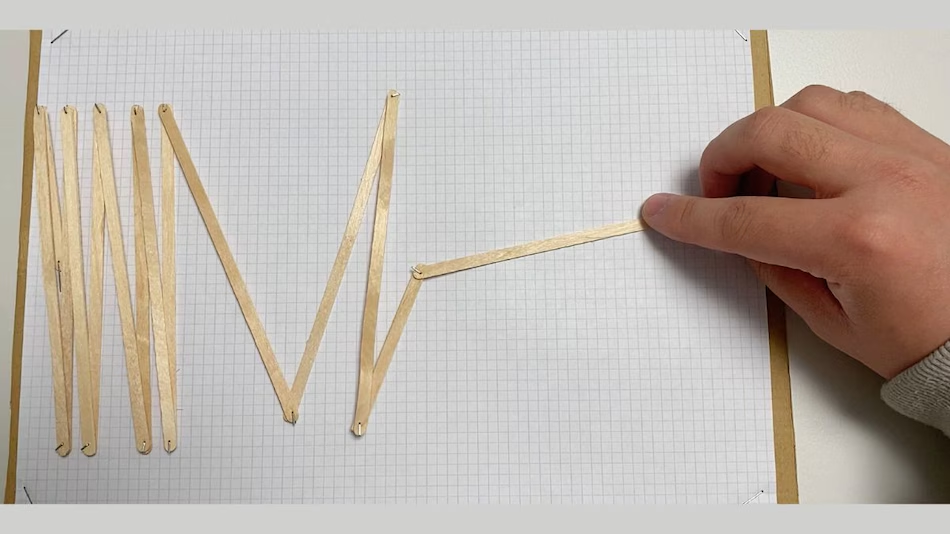

Spring-and-Friction Machines

The team developed mechanical spring-and-friction devices to study how springs and friction interact. These machines mimic the way features are learned in neural networks: the compression of each spring represents the dimensionality reduction performed by a layer, while friction reflects the network's nonlinearity. By studying how these mechanical systems evolve, scientists can gain insights into how DNNs separate and organize data during training.
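To make the spring-and-friction picture concrete, here is a minimal toy calculation. It is not the study's model: it assumes a chain of identical springs and blocks anchored to a wall, a free end pushed slowly with a constant force, and Coulomb friction that lets each block absorb at most a fixed force (static and kinetic friction taken as equal). The function `spring_compressions` and its parameters are hypothetical. Under these assumptions the force carried by each spring drops by the friction value at every block, so friction (the stand-in for nonlinearity) concentrates compression near the pushed end, while a frictionless chain compresses every spring equally.

```python
def spring_compressions(n_springs, push_force, friction, stiffness=1.0):
    """Quasi-static compression of each spring in a toy spring-block chain.

    Hypothetical toy model (an assumption, not the study's model):
    spring 0 joins a fixed wall to block 0, spring i joins block i-1 to
    block i, and the last block is pushed slowly toward the wall with
    `push_force`. Each block resists with Coulomb friction of at most
    `friction`, so the force passed on drops by `friction` at every block
    (never below zero). Returns compressions ordered from the wall
    (index 0) to the pushed end.
    """
    compressions = [0.0] * n_springs
    force = push_force
    for i in reversed(range(n_springs)):
        # Block i absorbs up to `friction`; the remainder compresses spring i.
        force = max(force - friction, 0.0)
        compressions[i] = force / stiffness
    return compressions
```

For example, `spring_compressions(4, 5.0, 0.0)` returns `[5.0, 5.0, 5.0, 5.0]`, while the same push with `friction=1.0` returns `[1.0, 2.0, 3.0, 4.0]`: with friction present, most of the compression happens in the springs closest to the pushed end.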

Law of Data Separation

According to Ivan Dokmanić, lead author of the study published in Physical Review Letters, the analogy sheds light on a “law of data separation”. By analyzing spring-block dynamics, the researchers obtained data separation curves that quantify how well a trained network separates data it did not see during training. This provides a physics-based blueprint for understanding feature learning and improving generalization in neural networks.
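The article does not say which metric the study uses, so the following is only a sketch under an assumed metric. One way to draw a layer-by-layer data separation curve is to score each layer's features by how spread out each class is relative to how far apart the class means are, then plot the logarithm of that score against depth. The code computes such a "separation fuzziness" score as the trace of the within-class covariance times the pseudo-inverse of the between-class covariance; the function names `separation_fuzziness` and `separation_curve` are hypothetical. Lower values mean better-separated classes.

```python
import numpy as np


def separation_fuzziness(features, labels):
    """Trace of (within-class covariance) @ pinv(between-class covariance).

    Assumed metric for illustration, not necessarily the study's; lower
    values mean tighter, better-separated classes. `features` has shape
    (n_samples, n_features) and `labels` has length n_samples.
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    n_samples, n_features = features.shape
    classes = np.unique(labels)
    global_mean = features.mean(axis=0)

    s_within = np.zeros((n_features, n_features))
    s_between = np.zeros((n_features, n_features))
    for c in classes:
        class_feats = features[labels == c]
        class_mean = class_feats.mean(axis=0)
        centered = class_feats - class_mean
        # Spread of samples around their own class mean, averaged over all samples.
        s_within += centered.T @ centered / n_samples
        # Spread of class means around the global mean, averaged over classes.
        gap = class_mean - global_mean
        s_between += np.outer(gap, gap) / len(classes)

    # Pseudo-inverse, because the between-class covariance has rank at most n_classes - 1.
    return float(np.trace(s_within @ np.linalg.pinv(s_between)))


def separation_curve(layer_features, labels):
    """Log separation fuzziness for each layer's features, ordered shallow to deep."""
    return [float(np.log(separation_fuzziness(f, labels))) for f in layer_features]
```

To apply this to a real network, one would record the hidden features at each layer for a held-out dataset and pass that list, together with the labels, to `separation_curve`; a curve that keeps falling with depth indicates that successive layers keep improving class separation.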

Implications for Smarter Neural Networks

This approach offers a fresh, physics-inspired perspective on DNN training, highlighting the interplay between dimensional compression and nonlinearity. By leveraging these insights, engineers could design more efficient networks that learn features more effectively, separate data more accurately, and generalize better to new datasets, potentially advancing AI capabilities across applications.