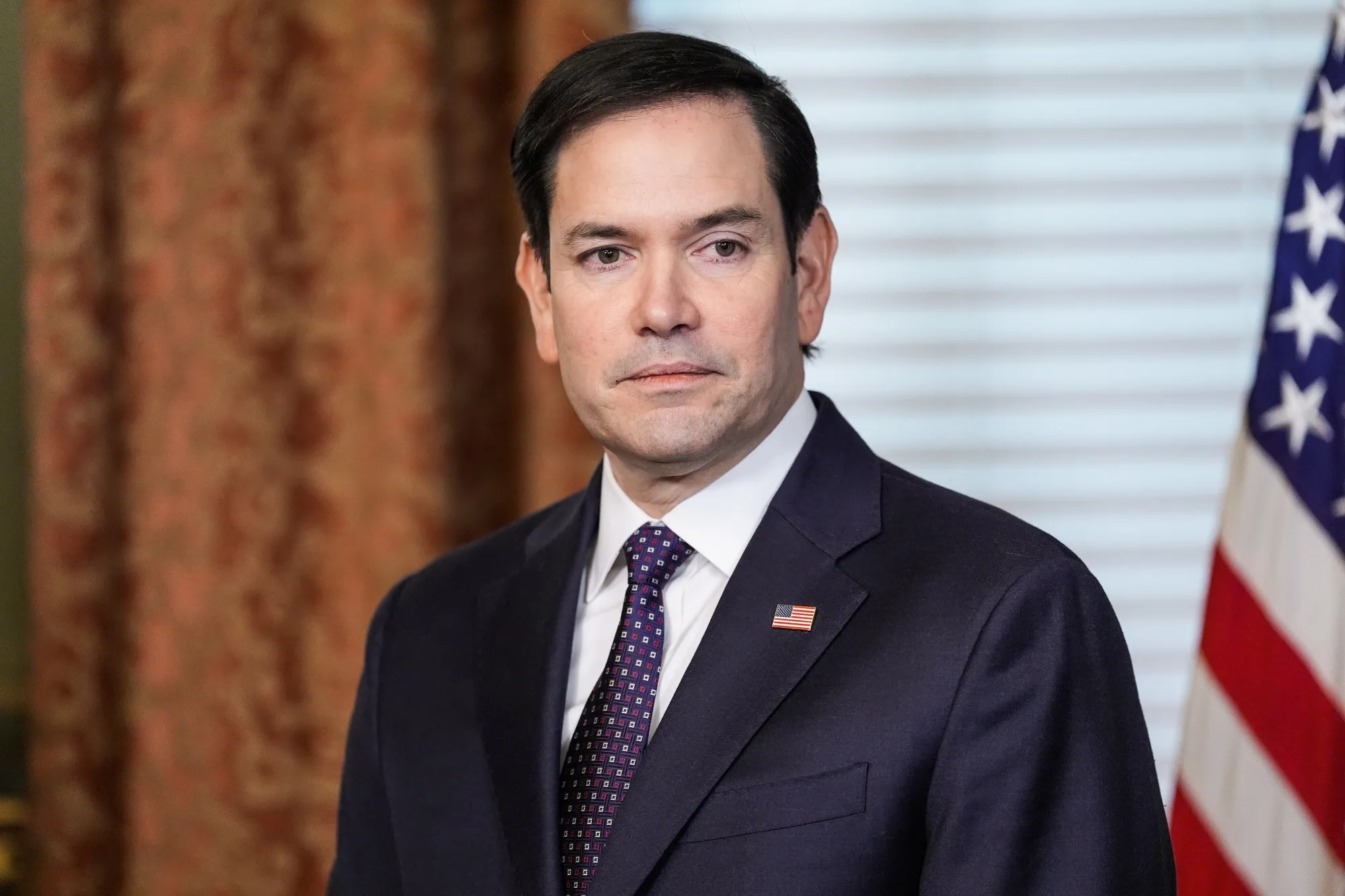

AI Impersonator Posed as Marco Rubio, Contacted Foreign Ministers in Sophisticated Deception

An individual using AI-generated voice technology impersonated U.S. Secretary of State Marco Rubio in June, contacting at least three foreign ministers, two U.S. officials, a governor, and a member of Congress, according to a classified State Department cable seen by Reuters.

The impersonator reached out through the Signal messaging app, sending voice messages and at least one text message inviting a recipient to communicate further. The July 3 cable warned that the impersonation was likely part of a broader attempt to extract information or gain access to government accounts.

“The actor likely aimed to manipulate targeted individuals using AI-generated text and voice messages,” it stated.

The State Department has launched an investigation into the incident. A senior official, speaking on condition of anonymity, said the government is taking steps to strengthen its cybersecurity protocols and guard against future threats. Although no direct breach was reported, the department noted that any sensitive information shared with the impersonator could have been compromised.

The incident follows a string of recent digital security lapses. In one earlier case, Mike Waltz, President Donald Trump’s former national security adviser, accidentally added a journalist to a Signal group chat, exposing classified discussions of military operations in Yemen.

The State Department is now instructing diplomatic and consular staff to warn external partners about impersonation tactics and fake accounts. The cable did not disclose which foreign ministers were contacted, but it linked this attempt to earlier phishing campaigns.

Russia Connection Suspected in Prior Campaign

The cable also referenced an April impersonation campaign attributed to a Russia-linked hacker who spoofed a @state.gov email address and copied branding from the Bureau of Diplomatic Technology. That campaign targeted think tanks, Eastern European activists, and former State Department officials, and the actor showed “extensive knowledge of internal naming conventions and documentation.”

Cybersecurity researchers have publicly tied that operation to Russia’s Foreign Intelligence Service.

FBI Warns of Broader AI Threat

In May, the FBI warned that malicious actors were using AI-generated voice and text messages to impersonate senior U.S. officials. These schemes aim to gain access to victims’ personal or professional accounts, which could then be used to target additional contacts.

The FBI declined to comment on the Rubio impersonation, but it has previously said such tactics can be used to obtain sensitive information or funds under false pretenses.

The episode also follows a recent Wall Street Journal report that White House Chief of Staff Susie Wiles was the target of a similar impersonation attempt, which is now under federal investigation.

As AI-generated content becomes more convincing and accessible, national security experts warn that the threat of deepfake diplomacy and synthetic political manipulation is rapidly escalating.