Samsung Begins Shipping HBM4 Chips to Boost AI Position

Samsung Electronics said it has started shipping its most advanced high-bandwidth memory chips, HBM4, as it seeks to close the gap with rivals in supplying critical components for artificial intelligence accelerators.

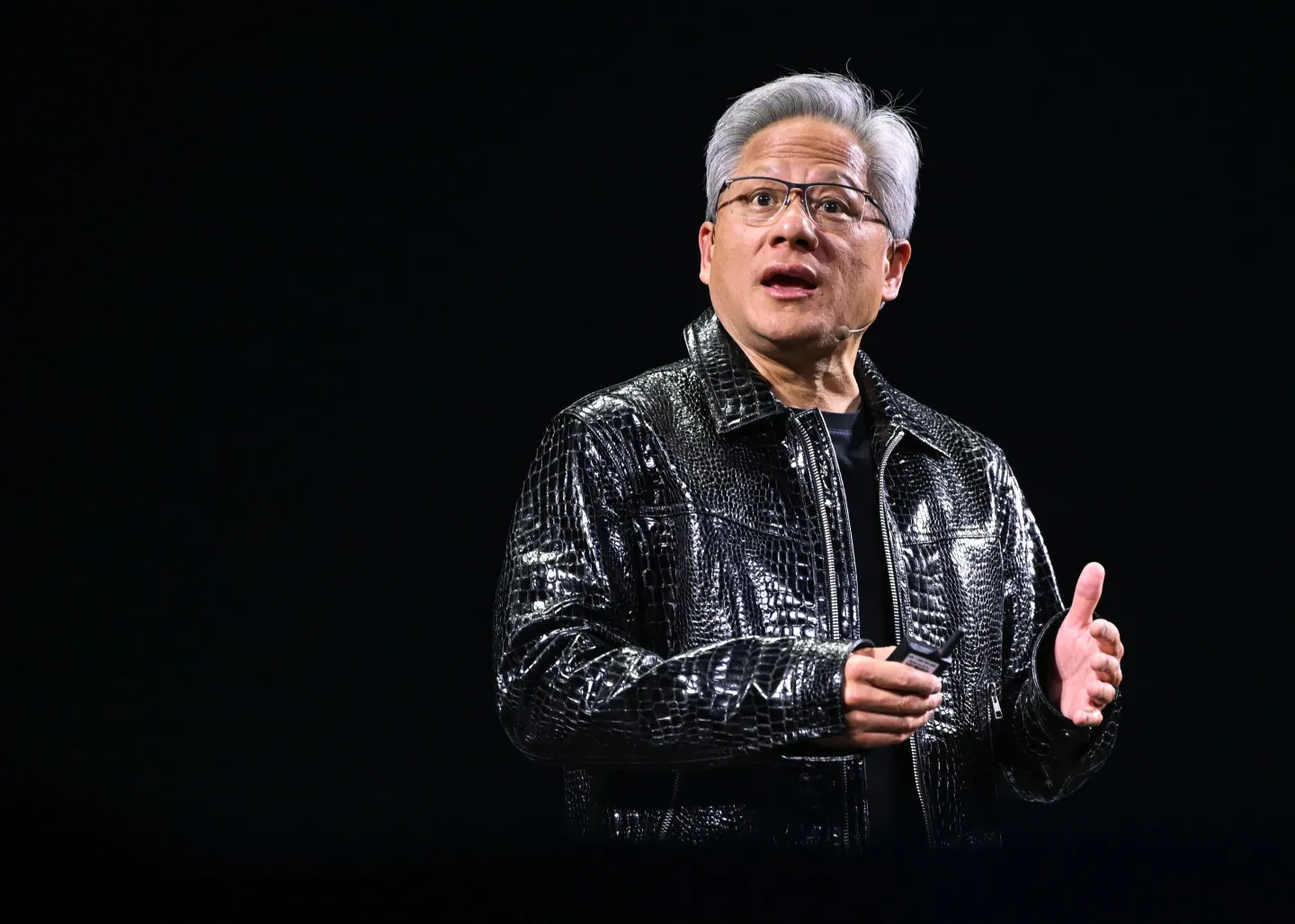

Demand for high-performance memory has surged amid the global buildout of AI data centers. HBM chips are essential for feeding large volumes of data into AI accelerators, including those developed by Nvidia. Samsung has previously trailed competitors such as SK Hynix in delivering earlier-generation HBM products.

Samsung said its HBM4 chips deliver a consistent processing speed of 11.7 gigabits per second (Gbps), a 22% improvement over its HBM3E predecessor, and can reach peak speeds of 13 Gbps to ease growing data bottlenecks. The company added that it plans to provide samples of next-generation HBM4E chips in the second half of the year.
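For readers who want to check the arithmetic behind those figures, the short Python sketch below backs out the HBM3E speed implied by the stated 22% gain and compares the 13 Gbps peak against it. It uses only the numbers quoted above. The function and variable names are illustrative, and it assumes the 22% gain is measured against a single HBM3E speed figure on the same basis as the 11.7 Gbps number, which the announcement as reported here does not spell out.

```python
# Figures as quoted in the article; nothing here comes from a datasheet.
HBM4_SUSTAINED_GBPS = 11.7   # "consistent" speed
HBM4_PEAK_GBPS = 13.0        # stated peak speed
GAIN_OVER_HBM3E = 0.22       # 22% improvement over HBM3E


def implied_baseline(new_speed: float, fractional_gain: float) -> float:
    """Return the old speed implied by a new speed and a fractional gain."""
    return new_speed / (1.0 + fractional_gain)


def percent_gain(new_speed: float, old_speed: float) -> float:
    """Return the percentage improvement of new_speed over old_speed."""
    return (new_speed / old_speed - 1.0) * 100.0


# Assumption: the 22% is relative to one HBM3E figure on the same basis.
hbm3e_gbps = implied_baseline(HBM4_SUSTAINED_GBPS, GAIN_OVER_HBM3E)
peak_gain_pct = percent_gain(HBM4_PEAK_GBPS, hbm3e_gbps)

print(f"Implied HBM3E speed: {hbm3e_gbps:.2f} Gbps")
print(f"Peak HBM4 gain over that baseline: {peak_gain_pct:.1f}%")
```

Under that assumption, the implied HBM3E figure comes to roughly 9.59 Gbps, and the 13 Gbps peak would be about 35.6% above it.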

Shares of Samsung rose following the announcement, reflecting investor optimism about its efforts to regain momentum in the competitive AI memory market. SK Hynix, which has maintained a leading position in HBM production, has said it aims to preserve its strong market share as competition intensifies. Meanwhile, U.S.-based Micron Technology has also begun high-volume production and customer shipments of HBM4.

The rollout underscores intensifying competition among memory manufacturers as AI infrastructure expansion continues to drive demand for faster, more efficient chip technologies.