Google Integrates SandboxAQ’s Quantitative AI Models into Cloud Services

Google Cloud has expanded its offerings by integrating SandboxAQ's large quantitative models (LQMs), which are designed to process complex numerical data and perform advanced statistical analysis. The move underscores how cloud providers increasingly see AI as a key driver of future growth.

Key Points:

- Partnership with SandboxAQ: Quantum startup SandboxAQ has announced that its LQMs will be available on Google Cloud, making it easier for businesses to use and deploy these models. SandboxAQ, a spin-off of Google-parent Alphabet, is seeking to expand its reach and customer base through this collaboration.

- Capabilities of LQMs: The models are designed to handle large-scale datasets and perform intricate calculations. This makes them well suited to building advanced financial models, automating trading strategies, and tackling complex business problems. They are particularly useful in industries such as life sciences, financial services, and navigation.

- Quantitative AI and Language Models: According to SandboxAQ CEO Jack Hidary, quantitative AI is essential for many sectors of the economy, especially those where mathematical and quantitative relationships are fundamental. He emphasized that quantitative AI and language models complement each other in solving complex challenges.

- SandboxAQ’s Growth: In the previous month, SandboxAQ raised $300 million in funding, which boosted its valuation to $5.6 billion. The company is backed by prominent investors including Fred Alger Management, T. Rowe Price, and Breyer Capital.

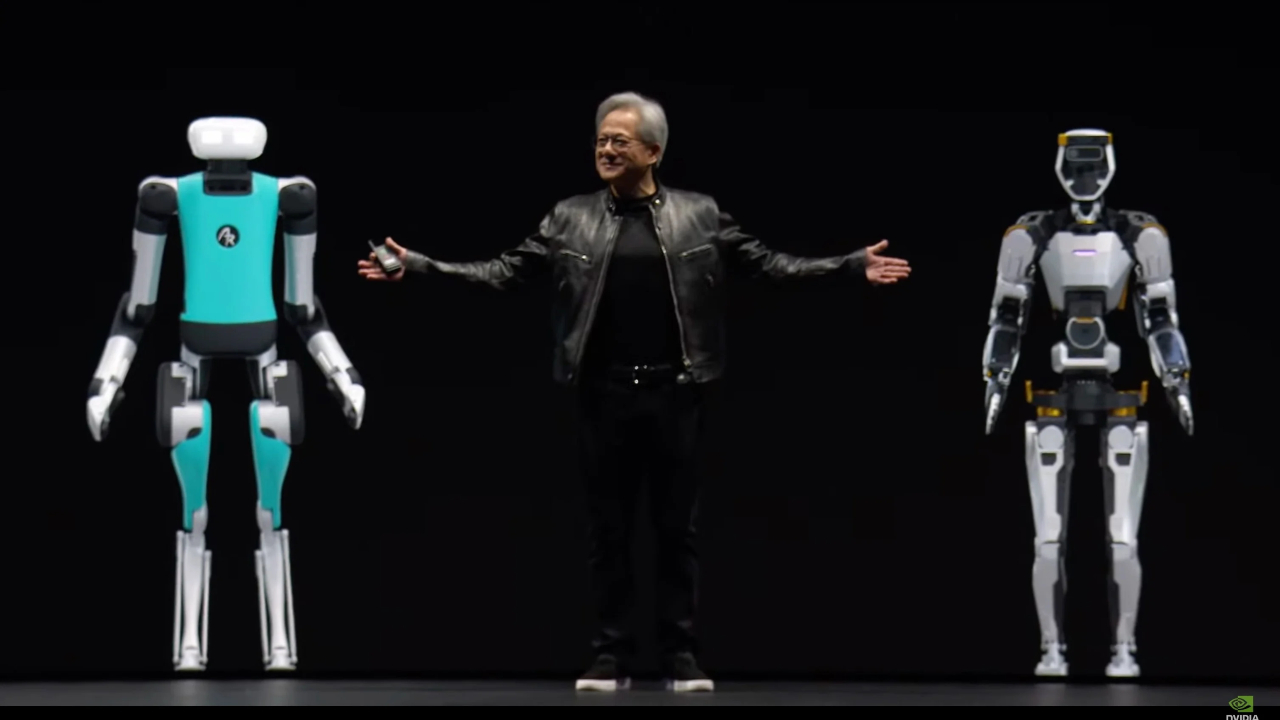

- Broader Industry Impacts: Google's push into quantum computing, including progress on new quantum chips, is widely viewed as part of its strategy to lead this emerging field. Competitors such as Microsoft and Nvidia have also been exploring quantum computing, although practical applications are still expected to be years away.