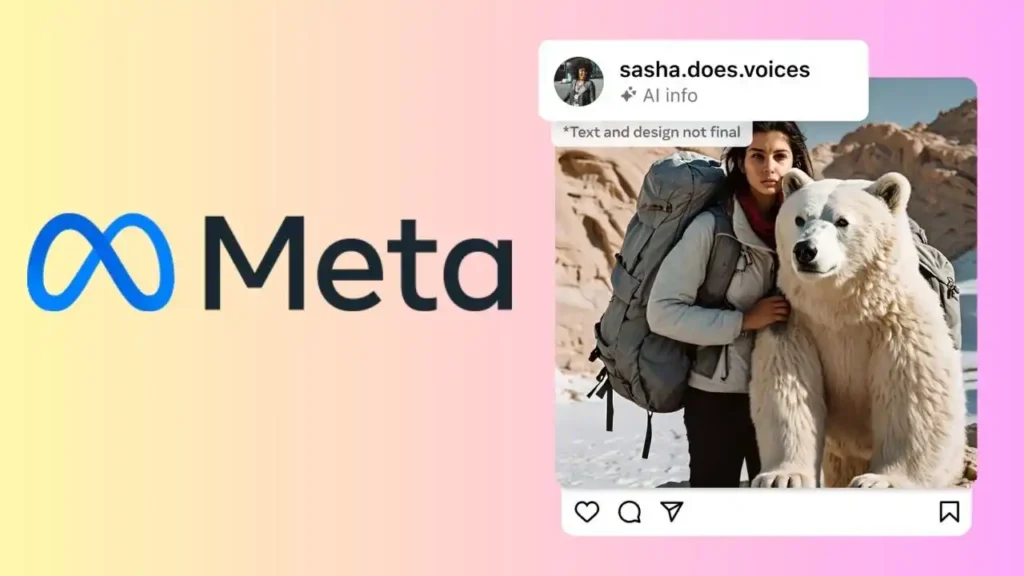

Meta’s Initiative: Labeling AI-Generated Images Across Facebook, Instagram, and Threads

Meta’s Transparency Effort: Users Can Now Disclose AI-Generated Video or Audio Shares

Meta has unveiled plans to label artificial intelligence (AI)-generated images across its entire ecosystem, including Facebook, Threads, and Instagram. The announcement, made on February 6, follows a call from Meta’s Oversight Board to revise the company’s policy on AI-generated content, prompted by concerns over a digitally altered video involving US President Joe Biden. While Meta already labels photorealistic images produced by its own AI models, it now aims to work with external companies so that all AI-generated images shared on its platforms are appropriately labeled.

In a post on Meta’s newsroom on Tuesday, Nick Clegg, Meta’s President of Global Affairs, emphasized the importance of labeling AI-generated content as a protective measure against misinformation. He stated, “We’ve been working with industry partners to align on common technical standards that signal when a piece of content has been created using AI.”

Additionally, Meta disclosed its ongoing efforts to label images sourced from notable providers such as Google, OpenAI, Microsoft, Adobe, Midjourney, and Shutterstock. Images generated by Meta’s proprietary AI models are currently denoted as “Imagined with AI.”

To correctly identify AI-generated images, detection tools require a common identifier in all such images. Many firms working with AI have begun adding invisible watermarks and embedding information in image metadata to make it apparent that the images were not created or captured by humans. Meta said it is able to detect AI images from the companies listed above because its detection tools rely on the same industry-approved technical standards those companies use.
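As a rough illustration of the metadata approach, the sketch below checks a file's raw bytes for one such identifier. It assumes the generator embedded the IPTC Digital Source Type value `trainedAlgorithmicMedia` in the image's XMP metadata. The function name `has_ai_metadata_marker` and the sample bytes are hypothetical, and this is not a description of Meta's actual detection system, which the announcement does not detail.

```python
# Hypothetical sketch, not Meta's detection pipeline.
# Assumption: the image generator wrote the IPTC "Digital Source Type" value
# "trainedAlgorithmicMedia" into the file's XMP metadata, which is stored
# as readable text inside the image file.

AI_SOURCE_MARKER = b"trainedAlgorithmicMedia"


def has_ai_metadata_marker(image_bytes: bytes) -> bool:
    """Return True if the assumed AI marker appears anywhere in the file bytes."""
    return AI_SOURCE_MARKER in image_bytes


# Synthetic stand-ins for real files (illustrative bytes, not valid images).
tagged_image = (
    b"\xff\xd8<x:xmpmeta>"
    b"http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
    b"</x:xmpmeta>\xff\xd9"
)
untagged_image = b"\xff\xd8 pixel data only \xff\xd9"

print(has_ai_metadata_marker(tagged_image))
print(has_ai_metadata_marker(untagged_image))
```

A check like this only reports whether the metadata is present. A missing marker does not show that an image was captured by a person, only that no such identifier was found in the file.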

But there are a few issues with this approach. First, not every AI image generator uses such tools to make it apparent that its images are not real. Second, Meta has noticed that there are ways to strip out the invisible watermark. To address this, the company said it is working with industry partners to create a unified watermarking technology that is not easily removable. Last year, Meta’s AI research wing, Fundamental AI Research (FAIR), announced that it was developing a watermarking mechanism called Stable Signature, which embeds the marker directly in the image generation process. Google DeepMind has also released a similar tool called SynthID.

But this covers only images. AI-generated audio and video have also become commonplace. Meta acknowledged that comparable detection technology for audio and video does not yet exist, although development is in the works. Until a way to automatically detect and identify such content emerges, the tech giant has added a feature that lets users on its platforms disclose when they share AI-generated video or audio. Once the content is disclosed, the platform will add a label to it.

Clegg also said that if people do not disclose such content and Meta finds that it was digitally created or altered, the company may apply penalties to the user. Further, if the shared content is of a high-risk nature and could deceive the public on matters of importance, Meta might add an even more prominent label to give users more context.